Measuring AI-driven recruitment in the same way you measure human-led recruitment is wrong. Not because AI is different, but because measuring "AI performance" is not a goal in itself.

When you delegate recruitment tasks to AI tools or agents, what matters is understanding whether that decision is paying off. Whether the outcomes are the same, better, or worse than when humans were doing the work.

That requires a measurement framework that connects AI activity to the numbers that actually matter: How the agents perform on their own, how they work alongside recruiters and candidates, and whether all of that is moving the right metrics – time-to-hire, quality of hire, and your bottom line.

That's what this AI Impact Framework is designed to measure.

The problem with traditional recruiting metrics

Traditional recruiting metrics still matter. But on their own, they're incomplete.

If time-to-fill increases, you know something has changed. What you don't know is why. It could be poor qualification, broken handoffs, candidate frustration, or recruiter behavior. The metric captures the outcome, not the mechanism.

IT monitoring has the opposite problem. It tells you systems are available, fast, and error-free, but says nothing about whether interactions are useful. A perfectly stable recruitment chatbot can still create a frustrating candidate experience. A voice agent can respond instantly and still misunderstand every candidate question.

Then there's the CX survey layer. Five-star ratings, NPS scores, and feedback forms that capture candidate satisfaction. These give you a signal, but a delayed and incomplete one: They reflect how candidates felt, not what actually happened in the conversation or why.

In AI-driven recruitment, every interaction is captured, every hiring decision is traceable, and every outcome can be linked back to what happened in the conversation. That's what makes a different kind of measurement possible: one that connects the mechanism to the result.

The AI Impact Framework: Measure the system, not just the outcome

AI doesn't operate in isolation. It sits between candidates and recruiters, shaping how they interact with each other. That middle layer is where most of the value is created or lost – and it's what the AI Impact Framework is built to measure.

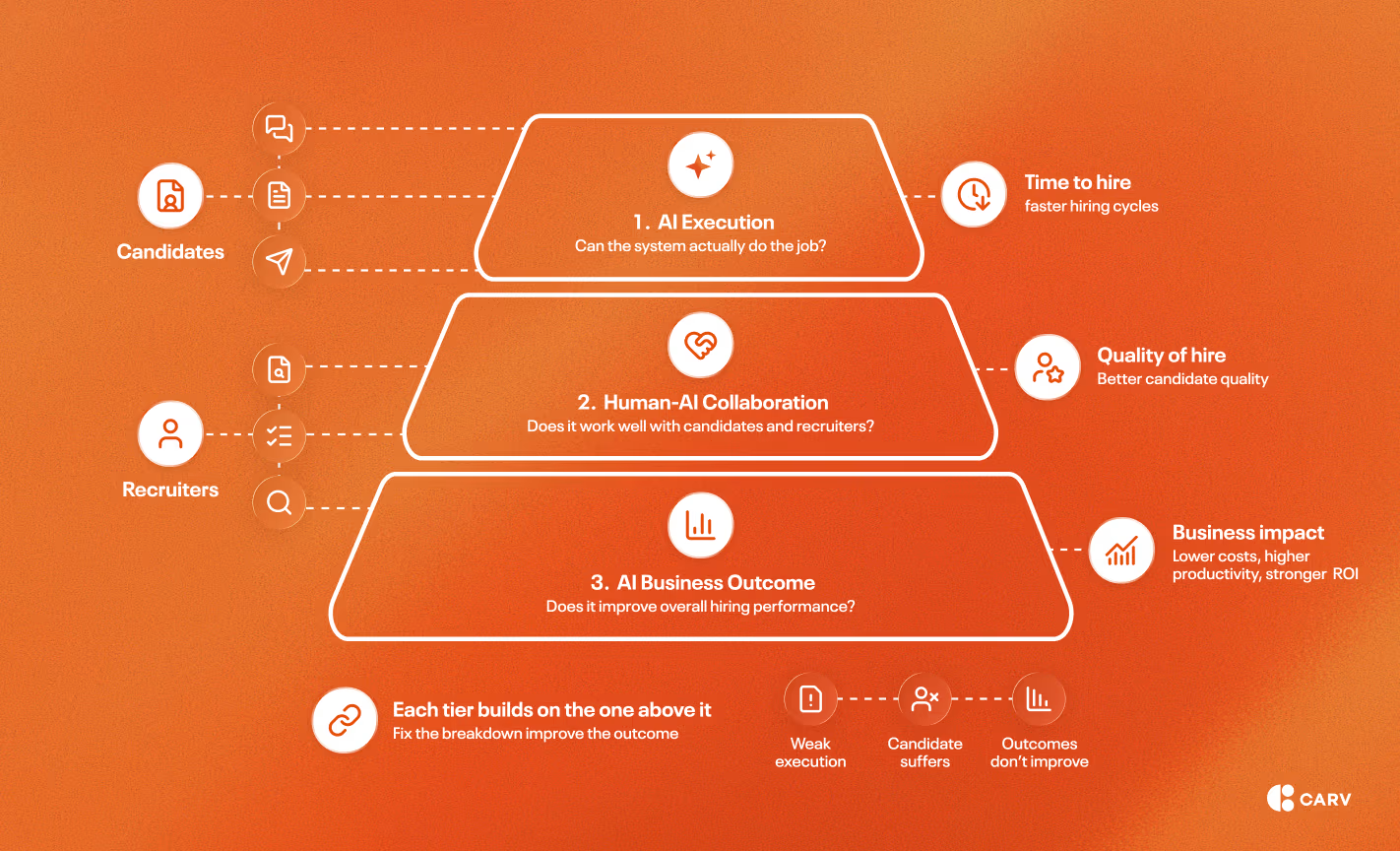

The framework organizes measurement into three tiers:

- AI execution – can the system actually do the job?

- Human-AI collaboration – does it work well with candidates and recruiters?

- Business outcomes – does it improve the overall hiring process performance?

Each tier builds on the one before it. If execution is weak, collaboration suffers. If collaboration breaks down, outcomes won't improve.

That cascade is what makes this structure useful. When results drop, you don't start from scratch. You follow the chain and find where the breakdown actually happened.
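To make the "follow the chain" idea concrete, here is a minimal sketch in Python. It assumes each tier has been summarized as a single health score from 1 to 5 and that anything below a threshold counts as a breakdown. The tier keys, the threshold, and the function name are illustrative assumptions, not part of the framework itself.

```python
from typing import Optional

# Tiers in dependency order: each one builds on the one before it.
# The key names are hypothetical labels chosen for this sketch.
TIER_ORDER = ["ai_execution", "human_ai_collaboration", "business_outcomes"]


def first_breakdown(scores: dict, threshold: float = 3.5) -> Optional[str]:
    """Return the earliest tier scoring below the threshold, or None if all pass.

    Assumes every tier has a summary score on a 1-5 scale (an assumption
    made for illustration only).
    """
    for tier in TIER_ORDER:
        if scores[tier] < threshold:
            return tier
    return None


scores = {
    "ai_execution": 4.6,
    "human_ai_collaboration": 2.9,
    "business_outcomes": 3.1,
}
print(first_breakdown(scores))  # human_ai_collaboration
```

In this example, business outcomes are weak, but the first tier to fall below the threshold is collaboration, so that is where the investigation would start.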

Let’s explore these tiers one by one.

Tier 1 metrics: AI execution

Is the system doing what it’s supposed to do?

At the foundation of this tier is a simple question: can the AI system reliably complete tasks?

That means sourcing candidates, scheduling interviews, updating candidate data, routing applicants, or answering basic questions. This is where many AI implementations quietly fail: Conversations can look smooth while the underlying actions don't actually happen.

Three things matter here:

- Task completion quality measures whether the agent completes what it was asked to do. Think of this as “basic agent competency”. When a candidate asks to reschedule an interview, does the appointment actually get updated? When someone provides a new email address, does it get recorded correctly in the ATS?

- Task adherence measures whether the agent follows through on what it discusses. A conversation can look perfect while the backend quietly fails. Completion quality tells you if the task was done; adherence tells you if it was done when and how it was supposed to be.

- Autonomous operation rate measures the percentage of tasks the agent completes without human intervention. When it encounters something it can't handle, does it escalate properly? Autonomous operation, through AI-driven automation, is what creates efficiency gains. If every task requires human verification or correction, you haven't automated anything; you've just added steps.

All three KPIs are measured on a 1-5 scale, which makes them comparable across capabilities and over time. A score of 5 on task completion but 3 on autonomy tells you the agent is doing quality work but escalating too often. That's a specific problem with a specific fix, which is exactly the point of measuring this way.
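As a rough illustration of how those scores can point to a specific problem, the sketch below takes the three Tier 1 KPIs on the 1-5 scale and returns a plain-language finding for each one that falls below a target. The target of 4.0, the class and field names, and the wording of the findings are assumptions for this example.

```python
from dataclasses import dataclass


@dataclass
class Tier1Scores:
    """The three Tier 1 KPIs, each on a 1-5 scale."""
    task_completion: float
    task_adherence: float
    autonomous_operation: float


def diagnose(scores: Tier1Scores, target: float = 4.0) -> list:
    """List a finding for every KPI below the (assumed) target score."""
    findings = []
    if scores.task_completion < target:
        findings.append("tasks are not being completed reliably")
    if scores.task_adherence < target:
        findings.append("tasks are not done when and how they should be")
    if scores.autonomous_operation < target:
        findings.append("agent escalates or needs human correction too often")
    return findings


# The example from the text: 5 on task completion, 3 on autonomy.
print(diagnose(Tier1Scores(task_completion=5, task_adherence=4.5, autonomous_operation=3)))
```

With these inputs, the only finding is the one about escalation, which matches the reading given above: quality work, but too many handoffs to humans.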

Tier 1 is necessary but not sufficient. An AI agent can be technically flawless and still deliver a poor experience. That's where Tier 2 comes in.

Tier 2 metrics: Human-AI collaboration

Is the system working for humans?

This is the layer most organizations skip entirely. Tier 1 tells you whether the AI is working. Tier 2 tells you whether it's working well for the people it's supposed to serve.

There are two sides to this:

- How the AI interacts with candidates, and

- How it collaborates with recruiters.

Both matter. If one breaks down, the whole system underperforms regardless of how good the other side is.

Candidate interaction

Strong performance here isn’t about sounding “human.” It’s about being useful, flexible, and responsive to context.

That shows up in several ways:

- Conversation quality measures whether interactions feel natural and helpful rather than robotic. This isn't captured well by post-interaction surveys. By the time a candidate fills one out, the signal is already diluted. A better approach is to analyze actual conversation behavior: Are candidates asking follow-up questions, sharing context voluntarily, and using natural language? Or are they giving short answers, abandoning conversations, and immediately requesting a human? Conversation behavior tells you more than a star rating.

- Channel awareness measures whether the agent is using the right medium at the right time. A well-designed agent adapts to candidate context, offering a call when someone is available to talk and defaulting to async when they're not. Systems that are locked to a single channel regardless of context will consistently underserve candidates in ways that don't show up in completion rates.

- Decision quality measures whether the agent is making or supporting decisions appropriately for the role. In high-volume talent acquisition contexts, agents often make autonomous qualification calls. In more complex roles, they provide analysis for a recruiter or hiring manager to act on. Either way, the question is the same: is the judgment sound, and can recruiters trust it?

- Candidate control measures how often candidates take the conversation in their own direction, asking questions before answering, changing topics, requesting different roles, and modifying appointments. In a rigid chatbot that doesn’t use AI, this breaks the system. In a well-designed agent, it should be common. If candidates never exercise control, it's a signal that the system isn't actually flexible.

Recruiter collaboration

On the recruiter side, the question is simpler: Does the AI make their job easier, or harder? The key metrics here are:

- Handover quality measures whether the information passed to recruiters is complete, accurate, and useful. A poor handover creates rework: recruiters re-screen candidates, ask the same questions again, or make decisions on incomplete data.

- Move-forward rate measures what percentage of candidates handed to recruiters actually advance. If that number is low, the AI is either screening poorly or failing to pass the right information. It's the clearest signal of whether the handover is actually working.

- Scheduling intelligence measures whether the AI is optimizing interview timing in a way that actually serves the hiring process. Speed between application and interview matters more than most teams realize; delays don't just slow things down, they lose candidates. A well-functioning agent monitors team capacity in real time and flags constraints before they become workflow bottlenecks.

- Decision support quality measures whether the analysis and context the agent provides to recruiters is trusted and actionable. If recruiters ignore it and verify everything themselves, the time savings disappear. If they rely on it, they can focus their time on judgment and relationship-building rather than information gathering.
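Of the recruiter-side metrics, move-forward rate is the simplest to compute. The sketch below assumes you can export a list of handovers with a flag showing whether the recruiter advanced each candidate. The `Handover` record and its field names are hypothetical and would need to be mapped to whatever your ATS actually exposes.

```python
from dataclasses import dataclass


@dataclass
class Handover:
    """One candidate passed from the AI agent to a recruiter (hypothetical record)."""
    candidate_id: str
    advanced: bool  # True if the recruiter moved the candidate to the next stage


def move_forward_rate(handovers: list) -> float:
    """Share of handed-over candidates that recruiters actually advanced."""
    if not handovers:
        raise ValueError("no handovers to measure")
    return sum(h.advanced for h in handovers) / len(handovers)


handovers = [
    Handover("c-001", True),
    Handover("c-002", True),
    Handover("c-003", False),
    Handover("c-004", True),
]
print(f"{move_forward_rate(handovers):.0%}")  # 75%
```

A single rate is only a starting point. Tracking it per role or per recruiter would show whether a low number comes from screening or from the information being passed along.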

When both sides of Tier 2 are working, the system creates a genuine handoff: candidates arrive at recruiters engaged and well-screened, and recruiters have what they need to move quickly to the next process stage or initiative.

When either side breaks down, Tier 3 metrics suffer, and the cause is hard to diagnose without this layer in place.

Tier 3 metrics: Business impact

Does the AI system actually move the needle?

Tier 3 is where AI performance connects to the metrics that matter to the business. These KPIs show whether delegating recruitment work to AI is actually paying off and supporting the decision-making process.

Three metrics matter here:

- Time of the process measures the total elapsed time from application to hire. This is the number stakeholders track, and in staffing and RPO contexts, it directly affects revenue. Slower processes mean lost candidates, delayed starts, and unfilled roles that cost clients money. When Tier 1 and Tier 2 are working, this number drops because screening, scheduling, and coordination happen without the delays that accumulate in human-led processes.

- Time spent on the process measures recruiter hours invested per hire. This is the efficiency story. AI doesn't just make the process faster; it shifts what recruiters spend their time on. The result is that the same team can handle more volume, or handle the same volume with less effort.

- Candidate utilization measures how much value is extracted from the total applicant pool. In traditional selection processes, a candidate who doesn't fit the target role is a lost candidate. In an AI recruitment process, that same candidate can be routed to alternative roles in the same interaction – before they disengage, before they find another job, before the moment passes. Utilization matters because candidate acquisition is expensive, and a well-engineered AI system can reduce cost-per-hire while improving candidate quality.

What ties these three together is traceability.

A faster process is only a useful insight if you can explain what drove it: autonomous scheduling, 24/7 availability, fewer handoff delays. Lower time spent per hire only means something if you can connect it to specific Tier 2 improvements in handover quality or screening accuracy.

That traceability is what turns a result into something repeatable, and what makes the difference between knowing that AI is working and knowing why.
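For baselining, the three Tier 3 metrics can be sketched from basic application records. The example below is a simplified illustration. The `Application` record and its fields are hypothetical. Candidate utilization is approximated here as the share of applicants hired into any role, which is one possible reading of the metric rather than a fixed definition.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean
from typing import Optional


@dataclass
class Application:
    """One applicant's journey (hypothetical record for this sketch)."""
    applied_on: date
    hired_on: Optional[date]  # None if the applicant was not hired into any role
    recruiter_hours: float    # recruiter time spent on this applicant


def tier3_metrics(apps: list) -> dict:
    """Compute the three Tier 3 metrics from a list of applications."""
    hires = [a for a in apps if a.hired_on is not None]
    if not hires:
        raise ValueError("no hires in this sample")
    return {
        # Time of the process: average days from application to hire.
        "avg_days_to_hire": mean((a.hired_on - a.applied_on).days for a in hires),
        # Time spent on the process: all recruiter hours divided by hires.
        "recruiter_hours_per_hire": sum(a.recruiter_hours for a in apps) / len(hires),
        # Candidate utilization (assumed definition): hires / total applicants.
        "candidate_utilization": len(hires) / len(apps),
    }


apps = [
    Application(date(2024, 1, 1), date(2024, 1, 11), 3.0),
    Application(date(2024, 1, 2), date(2024, 1, 22), 5.0),
    Application(date(2024, 1, 3), None, 1.0),
    Application(date(2024, 1, 4), None, 1.0),
]
print(tier3_metrics(apps))
```

Computing the same three numbers before and after introducing an agent gives you the baseline comparison. The Tier 1 and Tier 2 scores are then what explain any difference between the two.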

Real-world example: The ManpowerGroup Talent Solutions case

This framework for measuring AI agent performance and the effectiveness of AI investments isn't just theoretical. It's been validated in production at one of the world's largest staffing organizations: ManpowerGroup Talent Solutions.

You can watch our recent webinar below.


Putting it into practice

You don't need a perfect setup to start with AI-powered recruitment. Trying to build the perfect system upfront usually slows AI adoption down, as it overwhelms both TA and IT professionals.

Instead, here’s what to do:

- Start with baselines. Understand current performance across your Tier 3 metrics, and manually sample a set of recent interactions to get an early read on collaboration quality. This will keep your approach data-driven and ensure you have reliable benchmarks in place.

- Apply the methodology to one program first. A single role, team, or client. Don't try to transform everything at once.

- Stabilize execution before anything else. Experience and outcomes won't improve reliably until Tier 1 is solid.

- Add collaboration metrics early. Even simple proxies – move-forward rate, manually scored sentiment – are enough to surface issues before they show up in business outcomes.

- Review weekly, at least at first. To correctly evaluate the effectiveness of AI, look at scores and real interaction examples side by side. Numbers tell you what changed; examples tell you why.

- When something looks off, trace it before you fix it. Identify which tier the issue lives in, adjust at the source, then measure again.

- Scale once patterns are clear. Expand to similar use cases before moving to more complex ones.

The underlying shift is treating AI as part of the operating model rather than a one-time deployment. Performance evolves, and measurement needs to keep pace.

AI only works when the system does

AI doesn't fall short in recruitment because the technology isn't capable. It falls short because the system around it isn't measured properly.

If you only look at outcomes, you're always reacting. If you only look at technology, you're missing the point. This framework connects both – execution, interaction, and business impact – so you can see not just whether AI is working, but why, and what to do when it isn't.

That's how AI stops being a feature and starts becoming an operating advantage.
